Oct 24th, 2023 Logitech G Pro X Superlight 2 Review.Oct 27th, 2023 Intel Core i9-14900K Raptor Lake Tested at Power Limits Down to 35 W.Oct 26th, 2023 Alan Wake 2 Performance Benchmark Review - 30 GPUs Tested.Dec 9th 2022 NVIDIA GeForce RTX 4070 and RTX 4070 Ti Detailed Specs Sheet Leaks (93)Īdd your own comment 2 Comments on NVIDIA H100 Compared to A100 for Training GPT Large Language Models #1 kondamin.Jul 19th 2023 NVIDIA GeForce RTX 4060 Ti 16GB Review-Not (71).Oct 23rd 2023 NVIDIA GeForce RTX 4080 SUPER to Feature 20GB Memory, Based on AD102 (145).Oct 17th 2023 NVIDIA Readies GeForce RTX 4070 SUPER, RTX 4070 Ti SUPER, and RTX 4080 SUPER (76).May 9th 2023 NVIDIA GeForce RTX 4060 Ti Available as 8 GB and 16 GB, This Month.Jun 26th 2023 More Pictures of NVIDIA's Cinder Block-sized RTX 4090 Ti Cooler Surface (145).Apr 17th 2023 NVIDIA to Target $450 Price-point with GeForce RTX 4060 Ti (237).Jan 28th 2023 NVIDIA RTX 4090 Ti / RTX TITAN (Ada) Pictured, Behold the 4-slot Cinder Block (193).Aug 22nd 2023 NVIDIA Announces DLSS 3.5 Ray Reconstruction Technology, Works on GeForce 20 and Newer (89).Aug 21st 2023 NVIDIA BIOS Signature Lock Broken, vBIOS Modding and Crossflash Enabled by Groundbreaking New Tools (170).Below, you can see tables of comparison between two GPUs in training time, speedup, and cost of training. This inherently makes H100 more attractive for researchers and companies wanting to train Large Language Models (LLMs) and makes choosing the newer GPU more viable, despite the increased cost. To run the MLPerf Inference benchmarks on a MIG-enabled system under test, do the following: Add MIG details in the inference configuration file: Figure 2- Example configuration for running inferences using MIG enabled VMs. While the H100 is 2.2x more expensive, the performance makes it up, resulting in less time to train a model and a lower price for the training process. When the system has been configured, configure MLPerf v1.1 on the MIG VMs. 
CoreWeave prices the H100 SXM GPUs at $4.76/hr/GPU, while the A100 80 GB SXM gets $2.21/hr/GPU pricing. However, an interesting finding emerges when comparing the cost of running these GPUs in the cloud. Regarding performance, the NVIDIA H100 GPU achieved anywhere from 2.2x to 3.3x speedup. All training occurred on CoreWeave cloud GPU instances. Firstly, MosaicML has taken Generative Pre-trained Transformer (GPT) models of various sizes and trained them using bfloat16 and FP8 Floating Point precision formats.

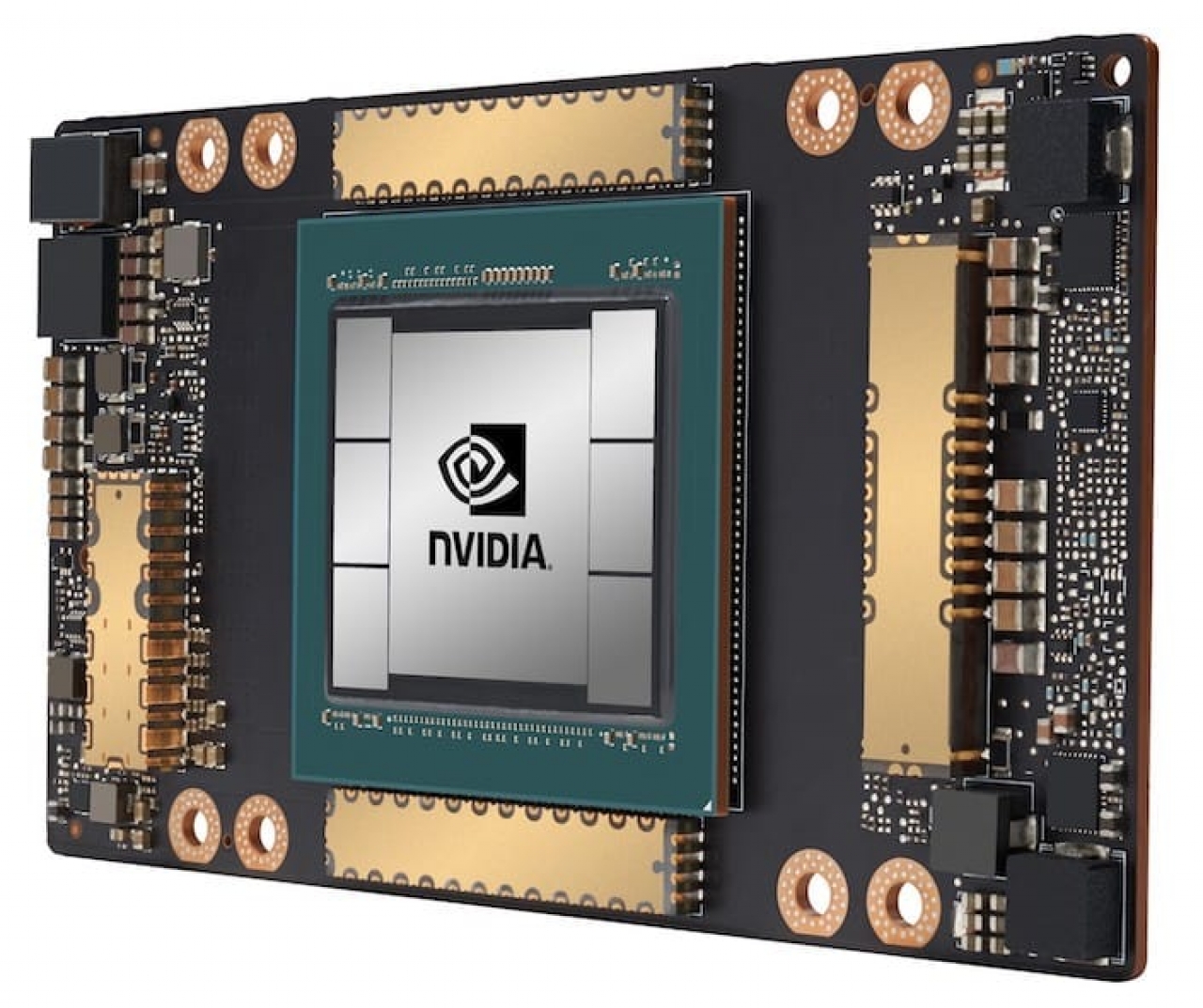

Today, thanks to the benchmarks of MosaicML, a startup company led by the ex-CEO of Nervana and GM of Artificial Intelligence (AI) at Intel, Naveen Rao, we have some comparison between these two GPUs with a fascinating insight about the cost factor. NVIDIA's H100 has recently become available to use via Cloud Service Providers (CSPs), and it was only a matter of time before someone decided to benchmark its performance and compare it to the previous generation's A100 GPU.
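The cost argument in this article comes down to simple arithmetic, which the short Python sketch below works through. It uses only the CoreWeave hourly prices and the 2.2x to 3.3x speedup range quoted in the article. The function name `relative_training_cost` is a hypothetical helper, and the calculation assumes the same number of GPUs on both sides, billing for training time only, and that the speedup applies to total wall-clock training time.

```python
# Hourly prices quoted in the article (CoreWeave, USD per GPU per hour).
H100_PRICE_PER_HOUR = 4.76
A100_PRICE_PER_HOUR = 2.21


def relative_training_cost(speedup: float,
                           h100_price: float = H100_PRICE_PER_HOUR,
                           a100_price: float = A100_PRICE_PER_HOUR) -> float:
    """Return the H100 training cost as a fraction of the A100 cost.

    Assumption (not from the benchmark itself): both runs use the same
    number of GPUs and are billed only for wall-clock training time, so
    cost = hourly price * training hours, and the H100 run needs
    1 / speedup of the A100's hours.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    price_ratio = h100_price / a100_price
    return price_ratio / speedup


if __name__ == "__main__":
    price_ratio = H100_PRICE_PER_HOUR / A100_PRICE_PER_HOUR
    print(f"H100 / A100 hourly price ratio: {price_ratio:.2f}x")

    # Low and high ends of the speedup range reported in the article.
    for speedup in (2.2, 3.3):
        rel = relative_training_cost(speedup)
        print(f"speedup {speedup:.1f}x -> H100 run costs {rel:.2f}x "
              f"the A100 run ({(1 - rel) * 100:.0f}% cheaper)")
```

Under these assumptions the H100 roughly breaks even at the low end of the speedup range (about 0.98x the A100 cost) and costs about 0.65x as much at the high end, which is consistent with the article's claim that the higher hourly price is offset by shorter training time.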
